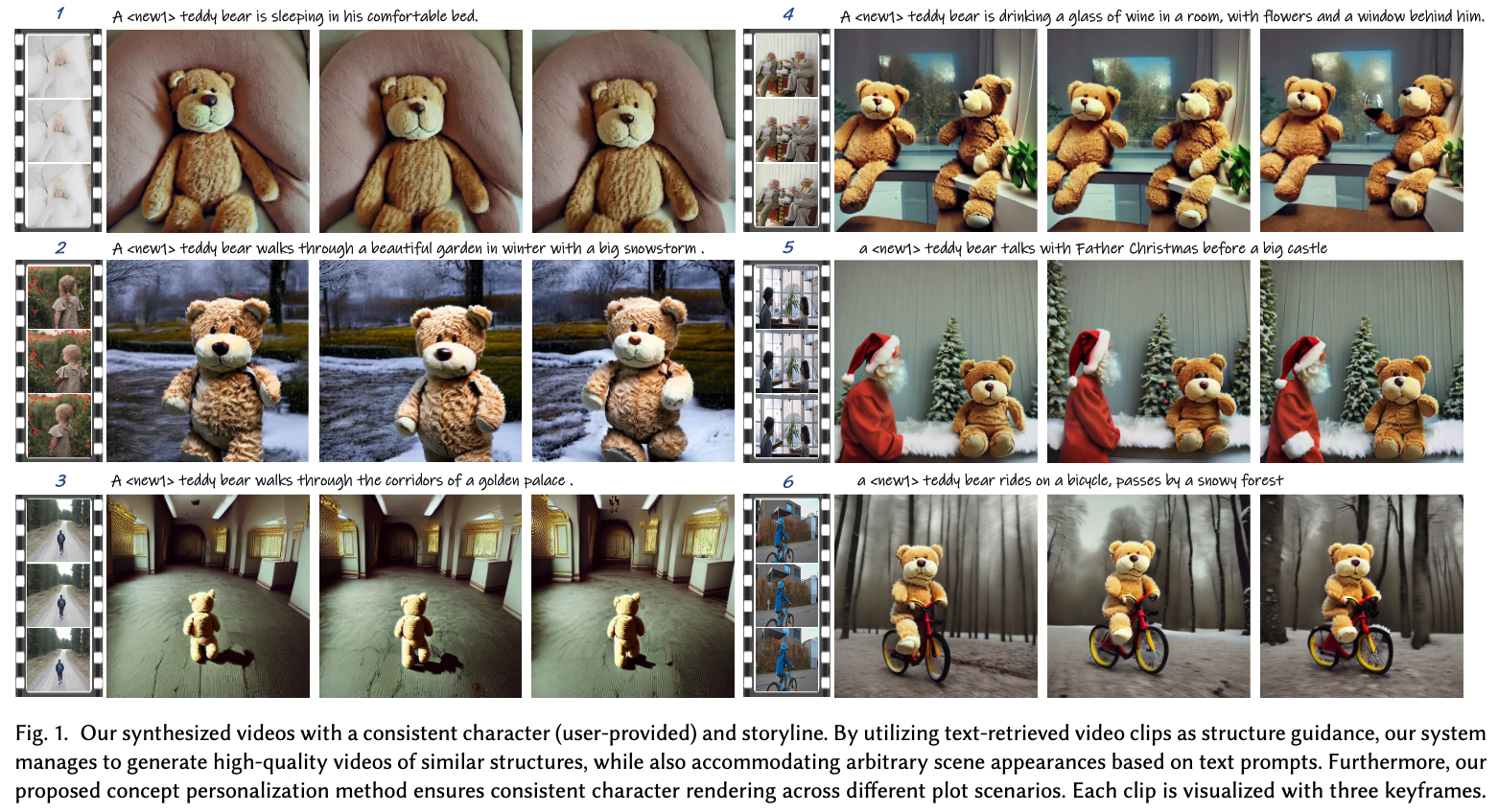

Generating videos for visual storytelling can be tedious and complex, typically requiring either live-action filming or rendered graphics animation. To bypass these challenges, our key idea is to exploit the abundance of existing video clips and synthesize a coherent storytelling video by customizing their appearance. We achieve this with a framework composed of two functional modules: (i) Motion Structure Retrieval, which provides candidate videos with the desired scene or motion context described by query texts, and (ii) Structure-Guided Text-to-Video Synthesis, which generates plot-aligned videos under the guidance of motion structure and text prompts. For the first module, we leverage an off-the-shelf video retrieval system and extract video depth as the motion structure. For the second module, we propose a controllable video generation model that offers flexible control over structure and characters: videos are synthesized by following the structural guidance and the appearance instructions. To ensure visual consistency across clips, we propose an effective concept personalization approach, which allows the desired character identities to be specified through text prompts. Our experiments show significant advantages of the proposed methods over various existing baselines. Moreover, user studies on our synthesized storytelling videos demonstrate the effectiveness of our framework and indicate promising potential for practical applications.
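The two-module flow described above can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the names `Clip`, `retrieve_clip`, `extract_motion_structure`, `synthesize`, and `make_story` are hypothetical, word-overlap matching merely stands in for the off-the-shelf retrieval system, and the depth-extraction and generation steps are placeholders rather than the actual models.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Clip:
    caption: str      # text describing the clip, used for retrieval
    num_frames: int   # toy stand-in for the actual video frames


def retrieve_clip(query: str, library: List[Clip]) -> Clip:
    """Toy retrieval (assumption): pick the clip whose caption shares the most words with the query."""
    query_words = set(query.lower().split())
    return max(library, key=lambda c: len(query_words & set(c.caption.lower().split())))


def extract_motion_structure(clip: Clip) -> List[str]:
    """Placeholder for per-frame depth estimation; returns one label per frame."""
    return [f"depth_{i}" for i in range(clip.num_frames)]


def synthesize(structure: List[str], prompt: str) -> dict:
    """Placeholder for the structure-guided text-to-video model."""
    return {"prompt": prompt, "num_frames": len(structure)}


def make_story(plot: List[dict], library: List[Clip]) -> List[dict]:
    """Run retrieval, structure extraction, and synthesis for each plot entry."""
    videos = []
    for shot in plot:
        clip = retrieve_clip(shot["query"], library)
        structure = extract_motion_structure(clip)
        videos.append(synthesize(structure, shot["prompt"]))
    return videos


# Hypothetical clip library and a one-shot plot.
library = [Clip("a man walking on a beach", 16), Clip("a dog running in a park", 24)]
plot = [{"query": "dog running", "prompt": "a teddy bear running in a park"}]
story = make_story(plot, library)
```

In this sketch the query text selects the motion source, while the prompt controls the appearance of the synthesized clip, which mirrors the separation between the two modules.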

-- Yann LeCun's Journey in China 😉 --

-- A Day of a Teddy Bear 😎 --

-- Duck Kingdom 😝 --

-- The Boy's Quest for Treasure 🦸 --